This Entropy word search explores one of the most fascinating and fundamental concepts in physics. Entropy, a measure of disorder and randomness within a system, was first formally defined by German physicist Rudolf Clausius in 1865. He introduced the term to describe how energy transforms irreversibly during thermodynamic processes, forever changing our understanding of the physical world.

Later, Austrian physicist Ludwig Boltzmann expanded the concept by connecting microscopic particle behavior to macroscopic properties through statistical mechanics. His groundbreaking equation revealed that entropy reflects the number of possible arrangements particles can adopt within any given system. This discovery applies everywhere in nature, from melting ice cubes to the eventual fate of the entire universe.

Entropy matters because it governs the direction of all natural processes. The second law of thermodynamics tells us that the entropy of an isolated system never decreases, which explains why energy gradually becomes less useful over time. Did you know that this principle is also why time itself appears to move only forward?

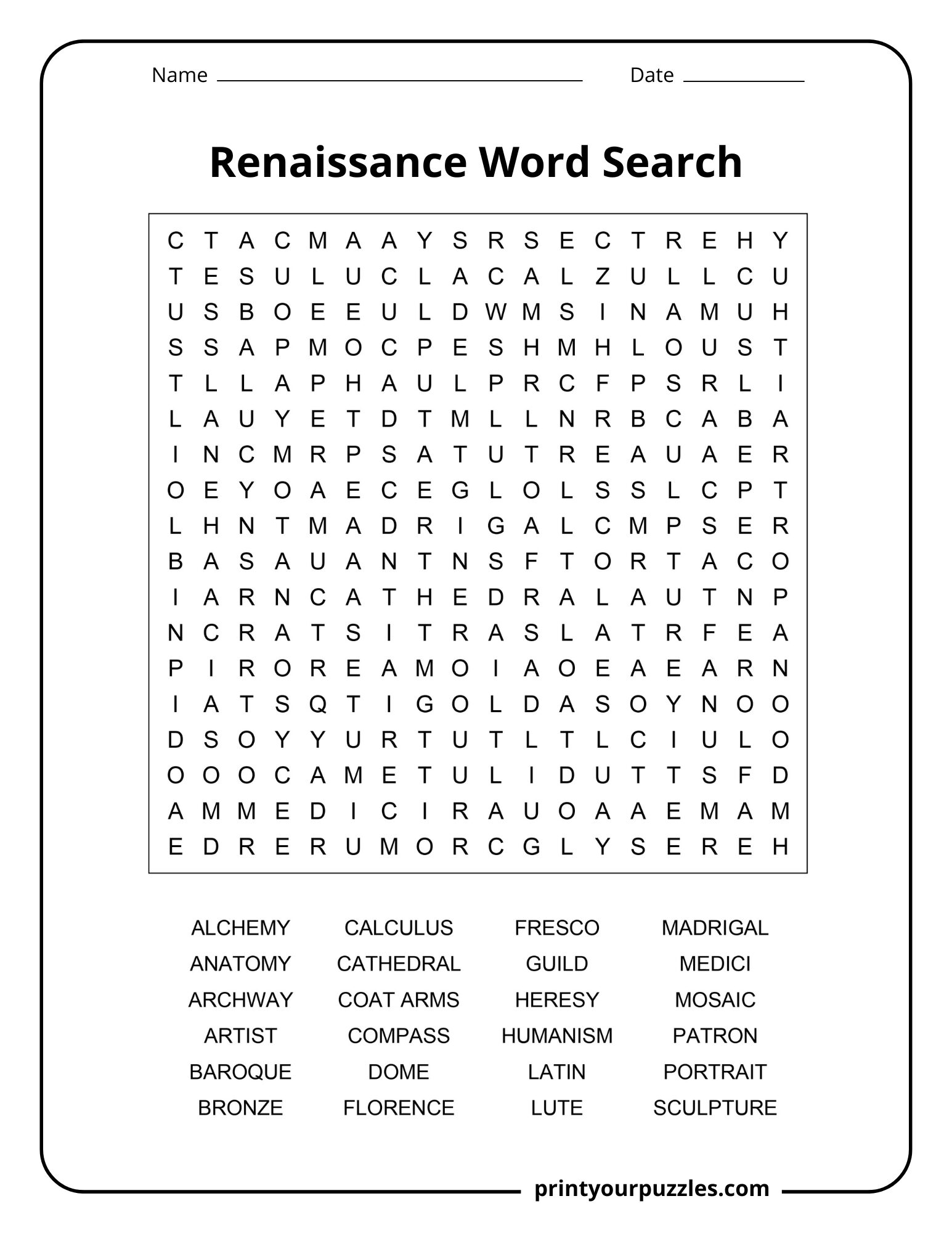

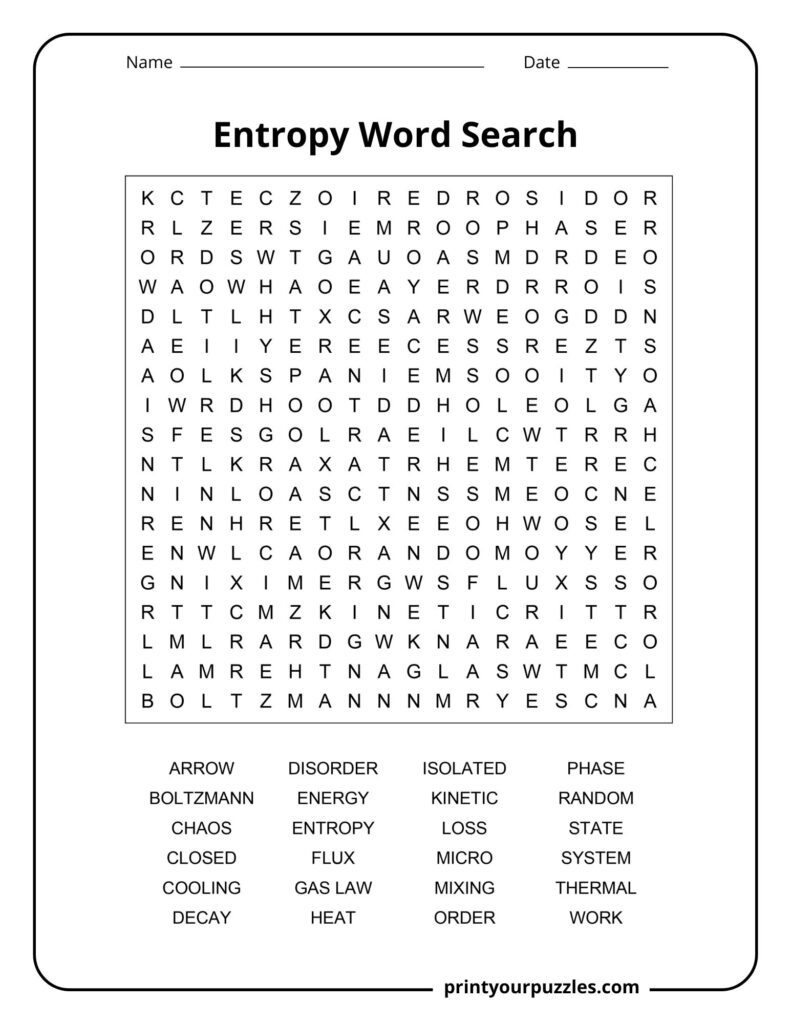

This Entropy word search printable features 24 carefully selected keywords that cover essential topics including thermodynamic laws, energy transfer, and statistical mechanics. Each word comes with a detailed definition to deepen your understanding while you solve the puzzle.

Beyond the grid, this word search printable also includes a FAQ section answering the most common questions about entropy, along with a curious Did You Know? section filled with surprising facts. Together, these elements make this puzzle both entertaining and genuinely educational.

ARROW, BOLTZMANN, CHAOS, CLOSED, COOLING, DECAY, DISORDER, ENERGY, ENTROPY, FLUX, GAS LAW, HEAT, ISOLATED, KINETIC, LOSS, MICRO, MIXING, ORDER, PHASE, RANDOM, STATE, SYSTEM, THERMAL, WORK

ARROW — Refers to the “arrow of time,” a concept indicating that time flows in one direction, from past to future, driven by increasing entropy in the universe.

BOLTZMANN — Ludwig Boltzmann, Austrian physicist who formulated the statistical definition of entropy, linking microscopic particle arrangements to macroscopic thermodynamic properties through his famous equation S = k log W.

CHAOS — A state of complete disorder and unpredictability closely associated with entropy. As entropy increases in a system, organized structures break down into increasingly chaotic arrangements.

CLOSED — A closed system exchanges energy but not matter with its surroundings. Its entropy can fall when it releases heat, but the combined entropy of the system and its surroundings never decreases, according to the second law of thermodynamics.

COOLING — The process of heat loss from a body. As objects cool, energy disperses into the environment, increasing overall entropy even though local temperature decreases and order may temporarily emerge.

DECAY — The gradual deterioration or breakdown of organized matter and energy into less ordered forms. Radioactive decay and material degradation are natural examples of entropy increasing over time.

DISORDER — A fundamental concept tied to entropy, representing the number of possible microscopic configurations of a system. Higher disorder means higher entropy, reflecting greater randomness in particle arrangements.

ENERGY — The capacity to perform work. Entropy describes how energy disperses and becomes less available for useful work, spreading from concentrated forms into diffuse, unusable heat.

ENTROPY — A thermodynamic quantity measuring the degree of disorder or randomness in a system. It quantifies energy unavailable for work and always tends to increase in isolated systems.

FLUX — The rate of flow of energy or matter through a surface. Entropy flux measures how much entropy is transported across system boundaries through heat transfer or mass exchange processes.

GAS LAW — Mathematical relationships describing gas behavior, connecting pressure, volume, and temperature. These laws help calculate entropy changes when gases expand, compress, or undergo thermal processes.

HEAT — Thermal energy transferred between bodies at different temperatures. Heat transfer is a primary mechanism driving entropy increase, as energy flows spontaneously from hot regions to cold ones.

ISOLATED — A system that exchanges neither energy nor matter with its surroundings. The second law states that entropy in an isolated system never decreases and reaches its maximum at equilibrium.

KINETIC — Relating to the motion of particles. Kinetic energy of molecules contributes to entropy, as faster-moving particles create more possible microscopic states and greater disorder within a system.

LOSS — In thermodynamic processes, useful energy is inevitably lost as waste heat due to entropy. No real engine achieves perfect efficiency because some energy always degrades irreversibly.

MICRO — Short for microstate, referring to a specific arrangement of particles in a system. Entropy is calculated by counting the number of microstates corresponding to a given macroscopic condition.

MIXING — The spontaneous blending of different substances, like gases or liquids. Mixing increases entropy because combined molecules have far more possible arrangements than when kept separately.

ORDER — A structured, organized arrangement of components in a system. Systems tend to move from ordered states toward disordered ones, so maintaining order requires a continual input of energy.

PHASE — A distinct state of matter such as solid, liquid, or gas. Phase transitions involve significant entropy changes; for example, melting and evaporation increase entropy as molecules gain freedom.

RANDOM — Lacking pattern or predictability. Entropy quantifies randomness in a system; maximum entropy corresponds to the most random possible configuration where no discernible structure or pattern remains.

STATE — The complete description of a system’s thermodynamic properties including temperature, pressure, and volume. Entropy is a state function, meaning it depends only on current conditions, not history.

SYSTEM — A defined portion of the universe under study, separated from its surroundings by boundaries. Entropy analysis examines how disorder changes within the system and its surrounding environment together.

THERMAL — Relating to heat and temperature. Thermal equilibrium represents maximum entropy for interacting bodies, where no further spontaneous heat flow occurs because temperature differences have vanished completely.

WORK — Energy transferred by a force acting over a distance. Entropy limits the conversion of heat into work; the Carnot cycle defines the maximum theoretical efficiency any heat engine can achieve.
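As a small illustration of the Carnot limit mentioned in the WORK entry, here is a minimal Python sketch. The function name `carnot_efficiency` and the example temperatures are assumptions chosen for illustration; the formula itself is the standard one, efficiency = 1 - T_cold / T_hot, with both temperatures in kelvin.

```python
def carnot_efficiency(t_hot_kelvin: float, t_cold_kelvin: float) -> float:
    """Return the maximum fraction of heat that any engine could turn into work.

    Both temperatures are absolute (kelvin). The hot reservoir must be
    warmer than the cold one, and the cold one must be above absolute zero.
    """
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("need t_hot > t_cold > 0 (temperatures in kelvin)")
    return 1.0 - t_cold_kelvin / t_hot_kelvin


# Illustrative (assumed) reservoir temperatures: 600 K and 300 K.
efficiency = carnot_efficiency(600.0, 300.0)
print(f"Carnot limit: {efficiency:.0%}")  # prints "Carnot limit: 50%"
```

Even this ideal engine wastes half of its heat input at these temperatures, and real engines fall below the limit because of friction and other irreversible losses.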


Entropy is a thermodynamic measure of disorder or randomness within a system. It quantifies how much energy becomes unavailable for doing useful work, always tending to increase naturally.

The second law of thermodynamics states that isolated systems evolve toward maximum disorder. There are vastly more disordered states than ordered ones, making increasing entropy statistically inevitable.
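To get a feel for why disordered states dominate, the sketch below counts arrangements in a toy model of 100 coins, where each coin stands in for a particle with two possible states. The coin model and the helper names `microstates` and `boltzmann_entropy` are assumptions made for illustration; the entropy formula is Boltzmann's S = k ln W.

```python
from math import comb, log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def microstates(n_coins: int, n_heads: int) -> int:
    """Number of distinct ways to have n_heads heads among n_coins coins."""
    return comb(n_coins, n_heads)


def boltzmann_entropy(w: int) -> float:
    """Boltzmann entropy S = k ln W for W equally likely microstates."""
    return K_B * log(w)


n = 100
ordered = microstates(n, 0)   # all tails: exactly 1 arrangement
mixed = microstates(n, 50)    # half heads, half tails: about 1e29 arrangements

print("ordered arrangements:", ordered)
print("mixed arrangements:  ", mixed)
print("entropy of ordered state (J/K):", boltzmann_entropy(ordered))
print("entropy of mixed state (J/K):  ", boltzmann_entropy(mixed))
```

With only 100 coins the evenly mixed state already has roughly 10^29 times as many arrangements as the perfectly ordered one, so a randomly shuffled system is overwhelmingly likely to look disordered.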

Entropy can decrease locally, for example when water freezes and gives up heat to its surroundings. However, the total entropy of the system plus its surroundings still increases.
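The freezing example can be checked with a rough calculation. This sketch assumes the water is already at 0 °C, that the surroundings act as a large reservoir at a fixed colder temperature, and that the latent heat of fusion is about 334 kJ per kilogram; the function name `freezing_entropy_changes` is hypothetical.

```python
LATENT_HEAT_FUSION = 334_000.0  # J/kg, approximate value for water
T_FREEZE = 273.15               # freezing point of water in kelvin


def freezing_entropy_changes(mass_kg: float, t_surroundings_kelvin: float):
    """Return (water, surroundings, total) entropy changes in J/K.

    Assumes the water starts at its freezing point and the surroundings
    stay at a constant temperature while absorbing the released heat.
    """
    heat_released = mass_kg * LATENT_HEAT_FUSION
    ds_water = -heat_released / T_FREEZE                    # water loses entropy
    ds_surroundings = heat_released / t_surroundings_kelvin  # surroundings gain it
    return ds_water, ds_surroundings, ds_water + ds_surroundings


# Illustrative case: 1 kg of water freezing in a -10 °C (263.15 K) freezer.
ds_water, ds_surroundings, ds_total = freezing_entropy_changes(1.0, 263.15)
print(f"water:        {ds_water:.1f} J/K")
print(f"surroundings: {ds_surroundings:.1f} J/K")
print(f"total:        {ds_total:.1f} J/K")
```

The water's entropy change is negative, but the colder surroundings gain more entropy than the water loses, so the total comes out positive.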

Entropy explains why ice melts, rooms become messy, and machines lose efficiency. Every natural process generates waste heat and disorder, reflecting entropy’s constant increase around us.

Rudolf Clausius introduced the term entropy in 1865 to describe irreversible energy transformations. Later, Ludwig Boltzmann gave it a statistical interpretation connecting microscopic particle arrangements to macroscopic behavior.

Until the End of Time by Brian Greene. Brian Greene masterfully bridges the gap between complex physics and human wonder. This book provides a scientifically precise yet lyrical exploration of entropy and the cosmos, making the daunting “arrow of time” accessible to any curious mind.

Once an egg is scrambled, its molecules reach a highly disordered state. Reversing this process would require decreasing entropy, which nature simply doesn’t allow spontaneously.

Some scientists predict a “heat death” in which the universe's entropy reaches its maximum. All energy will be evenly distributed, no temperature differences will exist, and no process capable of doing useful work will be possible.

Living organisms maintain remarkable internal order by consuming food and expelling waste heat. Life is essentially a temporary, beautiful rebellion against the universe’s relentless march toward disorder.

The arrow of time exists because entropy tends to increase. We remember the past because it had lower entropy, and the future holds greater disorder.

Physicists Jacob Bekenstein and Stephen Hawking discovered that a black hole's entropy is proportional to the surface area of its event horizon, not its volume. This makes black holes the highest-entropy objects that can occupy a given region of space.